For a long time, the dream of having a robot help you out around the house or handle a complex, messy task in a warehouse has been held back by one thing: the “brain” of the machine. Most robots are brilliant at doing one thing over and over in a perfectly controlled environment, like a car assembly line. But as soon as you change the lighting or move a tool an inch to the left, they get confused. Microsoft is looking to change that with the introduction of Rho-alpha, its first specialized robotics model designed to bring physical AI into the unpredictable real world.

Breaking the chains of the production line

The goal here is simple but ambitious. Microsoft wants to free robots from the rigid scripts of the factory floor. Traditionally, if you wanted a robot to perform a new task, you had to reprogram it from scratch. Rho-alpha is built differently. It is a foundation model, much like the large language models that power the chatbots we use every day, but it is specifically tuned for the physical world.

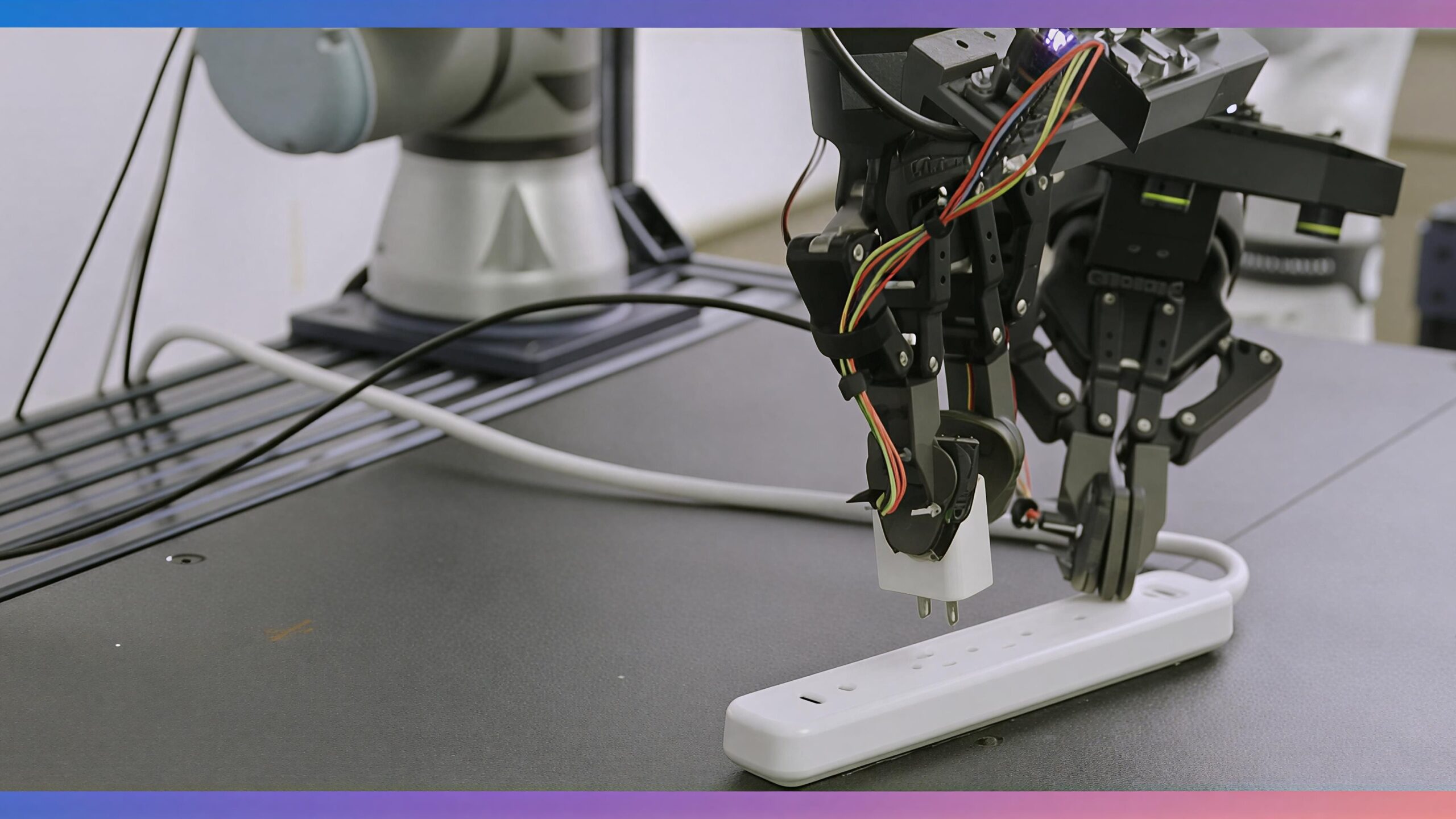

By using what experts call a Vision-Language-Action (VLA) approach, the model allows a robot to take in its surroundings, interpret a human command, and work out the physical movements needed to get the job done. This means that instead of coding every single arm movement, an operator could eventually just say, "Pick up that red wire," and the robot would understand what the wire is and how to grab it without snapping it.

More than just sight and sound

What makes this particular release interesting is that Microsoft is calling it a “VLA+” system. The “plus” stands for something we humans take for granted but robots find incredibly difficult: the sense of touch.

Rho-alpha integrates tactile sensing alongside vision and language. If a robot is trying to plug in a connector, seeing it is only half the battle. It needs to feel the resistance and the "click" to know it has succeeded. By adding this layer of sensory feedback, Microsoft is narrowing the gap between digital intelligence and physical execution. This focus on bimanual manipulation, that is, using two arms in coordination, is a major step toward robots that can handle tools, fold laundry, or assist in complex logistics.

Learning from the digital and the real

One of the biggest hurdles in robotics is that we do not have nearly as much data for physical movement as we do for text. You can scrape the internet for billions of sentences, but you cannot easily scrape the internet for the exact tension needed to turn a screwdriver.

To solve this, Microsoft is using a mix of real-world demonstrations and massive amounts of synthetic data generated in simulation. Using tools like Nvidia Isaac Sim, they can let a virtual robot practice a task thousands of times in a digital world before it ever touches a real-world object. When the robot does make a mistake in the real world, human operators can step in using teleoperation to “teach” it the correct way. This loop of simulation, real-world practice, and human correction is how physical AI becomes smarter over time.

A foundation for the future of industry

This isn't just a research project for the lab. Microsoft is opening a Research Early Access Program to let organizations start testing Rho-alpha. The long-term vision is to make these models available through Microsoft Foundry, allowing companies to use their own data to train robots for their specific needs.

As the barrier to entry falls, specialized robotics engineering teams may soon no longer be the only ones who can deploy advanced systems. Whether it is a humanoid robot working alongside people in a hospital or a dual-arm system in a retail backroom, the move toward foundational physical AI suggests we are finally heading toward machines that can think and feel their way through the day much as we do.

Release and Availability

Microsoft Rho-alpha is currently available through a Research Early Access Program for select organizations. Microsoft plans to bring the model to the broader Microsoft Foundry platform in the future. Pricing for enterprise deployment has not yet been disclosed, as the system remains in its evaluation phase.