Instagram's parent alerts are Meta's latest safety feature, designed to notify guardians when teens repeatedly search for content related to self-harm or suicide. The update aims to bridge the gap between digital activity and real-world support by giving parents more visibility into potential mental health crises.

Meta has deleted over 544,000 accounts belonging to under-16s in Australia following the implementation of the country's landmark social media ban. Despite this large-scale removal, Meta argues that the crackdown is failing to meet its objectives and is calling for age verification to be handled at the app store level by Apple and Google instead.

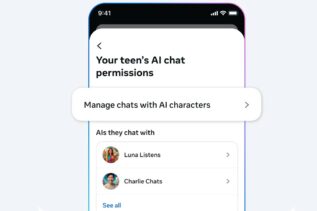

Meta will give parents control over their teens’ AI character chats on Instagram, including topic monitoring and a “kill switch” to block AI conversations. The move responds to safety concerns raised after inappropriate bot interactions.

Meta’s new AI translation lets creators dub and lip-sync Reels in English, Spanish, Hindi, and Portuguese. Viewers see videos in their preferred language, expanding access and engagement for global content.

Meta is expanding its use of AI-generated characters across its social media platforms, aiming to enhance user engagement. However, experts warn about the potential risks of misinformation and low-quality content.

Polish billionaire Rafal Brzoska and his wife are preparing to take legal action against Meta, the parent company of Facebook...

Instagram, the popular social media platform, has unveiled new features aimed at enhancing user engagement and creativity. These additions include...

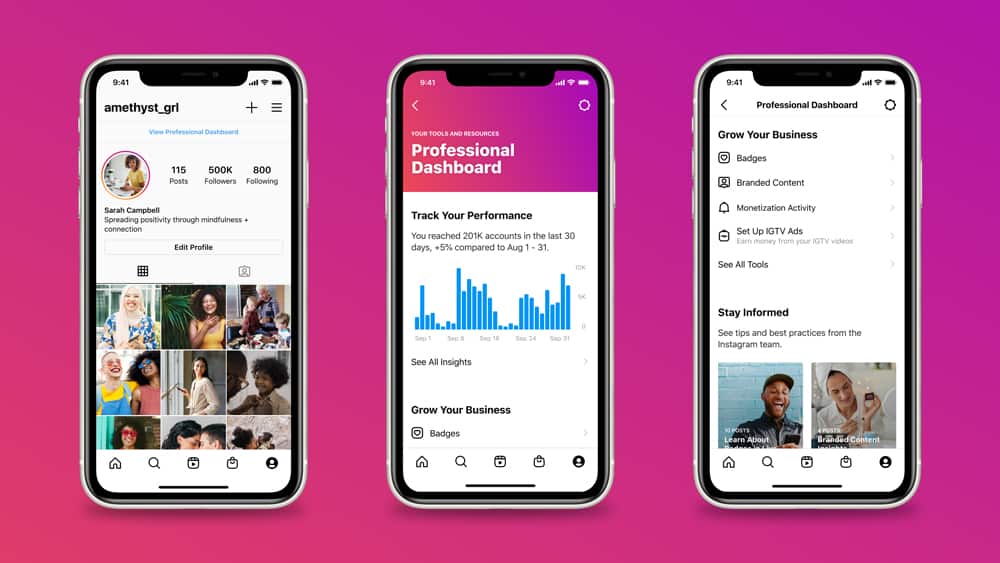

Last January, Instagram launched Professional Dashboard, a central destination of tools and resources for creators and businesses to track their...