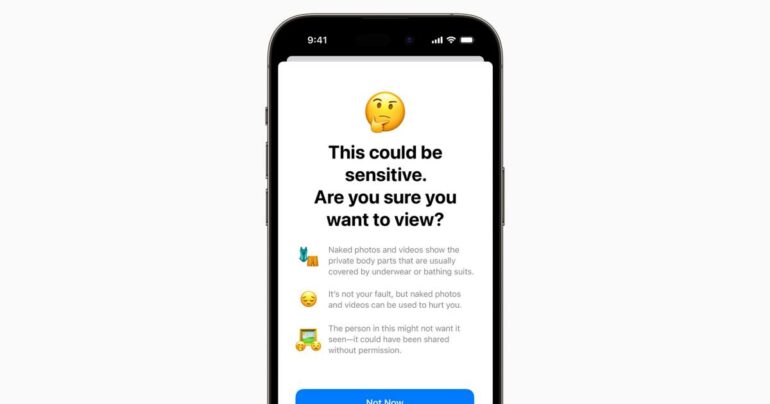

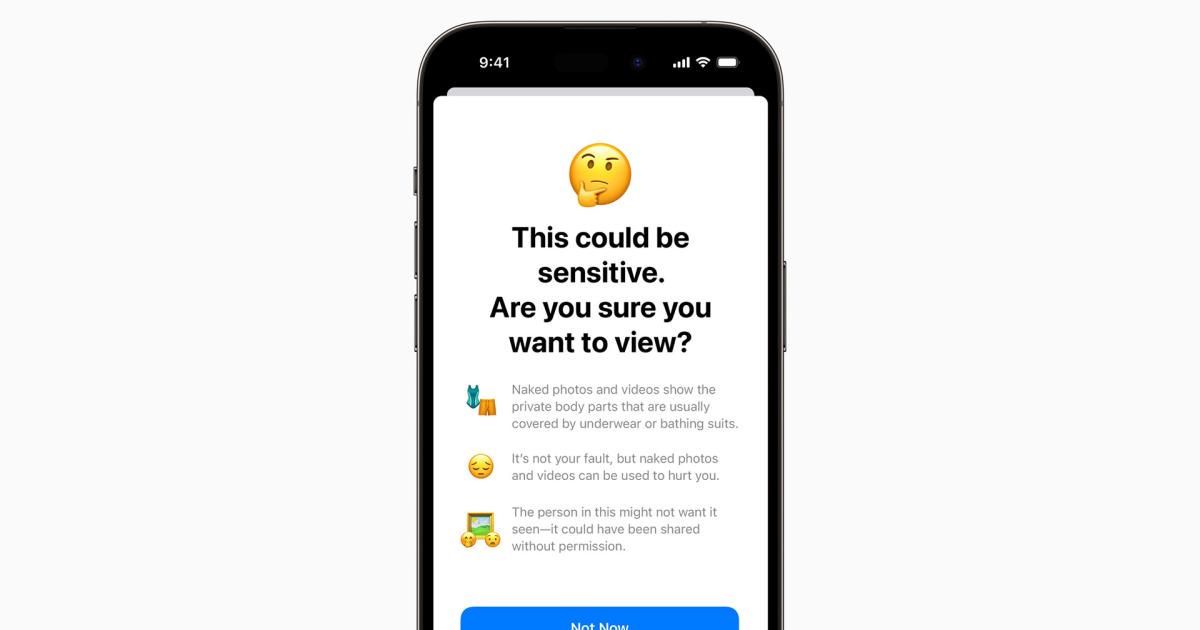

Apple’s upcoming iOS 17 update promises to enhance content sharing while introducing new safeguards against abuse of those features. One notable addition is Sensitive Content Warning, designed to help adults avoid unsolicited nude photos and videos. Users who receive potentially concerning content can decline it, view it anyway, or find resources for help.

Communication Safety, Apple’s protection for children, now extends beyond the Messages app. Using machine learning, it can detect and blur sexually explicit material sent through AirDrop, Contact Posters, FaceTime video messages, and the Photos picker. It now recognizes videos in addition to still images. Children who encounter such content can reach out to trusted adults for help or find useful resources.

Both Sensitive Content Warning and Communication Safety process content on the device itself, and Apple says it does not have access to the sensitive material. To use Communication Safety, users must enable Family Sharing and designate certain accounts as belonging to children.

Apple first unveiled its plans to address unsolicited nudes in 2021, alongside a proposal to flag photos uploaded to iCloud that contained known child sexual abuse material (CSAM). The company abandoned that proposal at the end of 2022 amid concerns about government pressure to scan for other types of images and about the risk of false positives. Communication Safety and Sensitive Content Warning do not raise those concerns. Their sole purpose is to prevent individuals from traumatizing others.

Legislators have sought to criminalize the sending of unwanted nude content, and various services have implemented their own nudity detection tools. Apple is mainly filling gaps in this existing deterrence system. The aim is to make it less likely that bad actors succeed in bombarding iPhone users with inappropriate texts and calls.

With Sensitive Content Warning, iOS 17 gives users more control over what they see and helps them avoid unwanted and potentially distressing content. The options to decline or view sensitive content, together with resources for assistance, let users make informed decisions and seek help when needed.

Communication Safety adds further protection, particularly for children, by using machine learning to detect and blur sexually explicit material across several apps and sharing features. Detection covers both still images and videos. Children who encounter such content can reach out to trusted adults or access resources, which fosters a safer digital environment.

Apple’s commitment to on-device processing underscores its focus on user privacy and security. Because the analysis runs locally, users retain control over their sensitive content, which mitigates concerns about external access or surveillance. Requiring Family Sharing and child account designation for Communication Safety adds a further layer of protection for young users.

Apple’s decision to abandon its plan to flag photos containing known CSAM reflects the difficulty of balancing privacy, security, and the prevention of abuse. Addressing unsolicited nudes and explicit content remains a priority, but Apple acknowledges the complexities and risks of certain detection methods. By focusing on features that prevent abuse without compromising user privacy, Apple aims to meet user expectations while minimizing negative consequences.

In summary, iOS 17 introduces Sensitive Content Warning and expands Communication Safety to protect users from abusive and unwanted content. Both features operate on-device and give users greater control, along with resources to address concerns. With these refinements, Apple aims to build a safer digital ecosystem where people can share content with less risk of abuse and trauma.