Tesla has unveiled a major investment: a large AI compute cluster built around 10,000 Nvidia H100 GPUs, hardware designed to accelerate AI workloads. The system, which began operating this week, will process the large volumes of data collected by Tesla's vehicle fleet. Its primary goal is to speed up the development of fully autonomous vehicles, according to Tim Zaman, who leads AI infrastructure at Tesla.

Tesla has been working toward full autonomy in its vehicles for years, and it has committed more than $1 billion to building the infrastructure needed to achieve it.


Tesla Invests Over $300 Million in Massive AI Compute Cluster with 10,000 Nvidia GPUs for Self-Driving Vehicle Development

That $1 billion commitment dates to July 2023, when Tesla CEO Elon Musk said the company planned to spend that amount over the following year on expanding Dojo, its in-house supercomputer. Dojo is built on Tesla's own silicon, starting with the D1 chip, which contains 354 custom CPU cores. Each training tile module combines 25 D1 chips, and the baseline Dojo V1 configuration contains a total of 53,100 D1 cores.
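The Dojo core counts above fit together with simple multiplication. The short Python sketch below derives how many tiles and D1 chips a 53,100-core configuration implies. It assumes every tile is fully populated with 25 chips of 354 cores each; the variable names are illustrative and are not taken from any Tesla software.

```python
# Illustrative names; the figures come from the article's description of Dojo.
# Assumption: every tile is fully populated (25 chips x 354 cores).
CORES_PER_D1_CHIP = 354
CHIPS_PER_TILE = 25
DOJO_V1_TOTAL_CORES = 53_100

cores_per_tile = CORES_PER_D1_CHIP * CHIPS_PER_TILE   # cores in one training tile
tiles_in_v1 = DOJO_V1_TOTAL_CORES // cores_per_tile   # whole tiles implied by the total
chips_in_v1 = tiles_in_v1 * CHIPS_PER_TILE            # D1 chips across those tiles

# Sanity check: the total divides evenly into whole tiles.
assert DOJO_V1_TOTAL_CORES % cores_per_tile == 0

print(f"{cores_per_tile} cores/tile, {tiles_in_v1} tiles, {chips_in_v1} D1 chips")
```

Under these assumptions, the 53,100-core figure works out to six tiles of 8,850 cores each, or 150 D1 chips.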

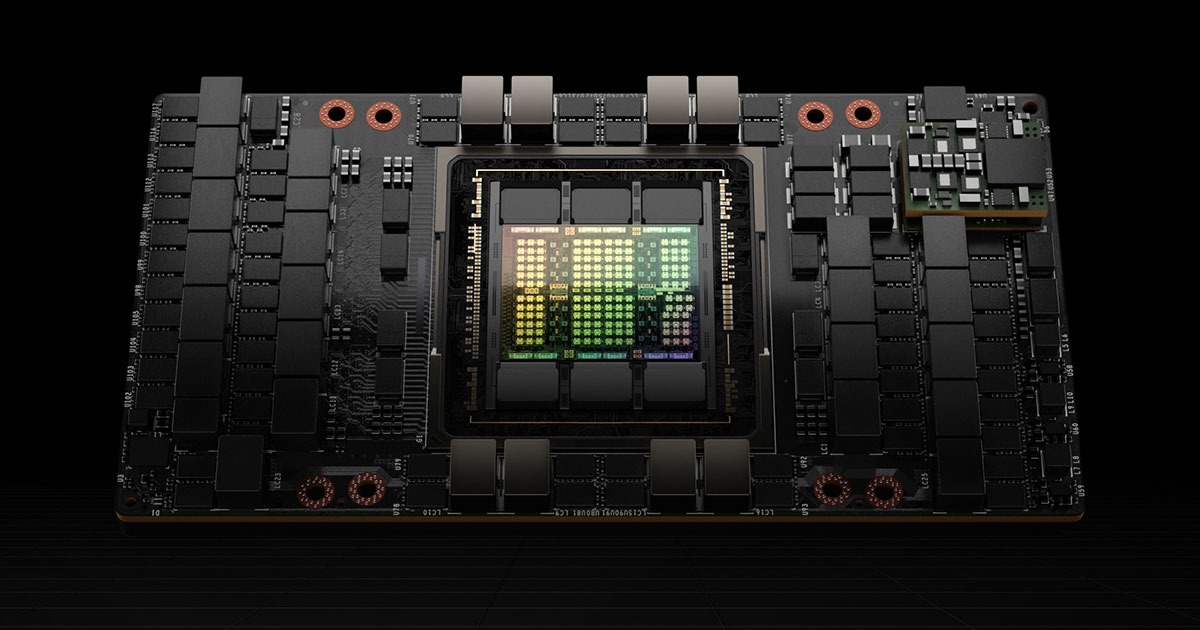

By June 2021, Tesla had already built a compute cluster containing 5,760 Nvidia A100 GPUs. The new 10,000-GPU H100 cluster substantially outstrips that earlier system.

Valued at more than $300 million, the new cluster has a theoretical peak of 340 FP64 PFLOPS for technical computing and 39.58 INT8 exaOPS for AI workloads, according to Tom's Hardware. That puts Tesla's compute capacity on par with Italy's Leonardo supercomputer and places it among the most powerful computing systems in the world.
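The headline figures follow from multiplying a per-GPU peak rating by the GPU count. The Python sketch below assumes Nvidia's published H100 SXM ratings of 34 TFLOPS for standard (non-Tensor Core) FP64 and 3,958 TOPS for INT8 Tensor Core throughput with structured sparsity. Which per-GPU ratings Tom's Hardware actually used is our assumption, and the results are theoretical peaks, not measured performance.

```python
# Assumed per-GPU peak ratings (Nvidia H100 SXM datasheet values).
NUM_GPUS = 10_000
FP64_TFLOPS_PER_GPU = 34.0     # FP64, non-Tensor Core
INT8_TOPS_PER_GPU = 3_958.0    # INT8 Tensor Core, with structured sparsity

TERA_PER_PETA = 1_000
TERA_PER_EXA = 1_000_000

# Aggregate theoretical peak: GPU count times per-GPU rating, converted to larger units.
fp64_pflops = NUM_GPUS * FP64_TFLOPS_PER_GPU / TERA_PER_PETA
int8_exaops = NUM_GPUS * INT8_TOPS_PER_GPU / TERA_PER_EXA

print(f"FP64 peak: {fp64_pflops:.0f} PFLOPS")
print(f"INT8 peak: {int8_exaops:.2f} exaOPS")
```

This simple scaling ignores interconnect, memory bandwidth, and software overheads, so sustained throughput on real training jobs is typically well below these numbers.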

Nvidia's GPUs underpin many of the world's leading generative AI platforms. Beyond data-center servers, they are used in fields ranging from medical imaging to weather modeling.

Tesla aims to use this processing capacity to analyze its large dataset faster and more efficiently. The ultimate goal is to build a model that can match human driving ability.

While many companies rely on cloud infrastructure from providers such as Google and Microsoft, Tesla has chosen to run its supercomputing infrastructure on premises. As a result, the company is responsible for maintaining and managing the entire system itself.