ChatGPT users can now branch conversations, letting them explore multiple ideas or directions from the same starting point without losing their main chat thread—perfect for brainstorming and creative work.

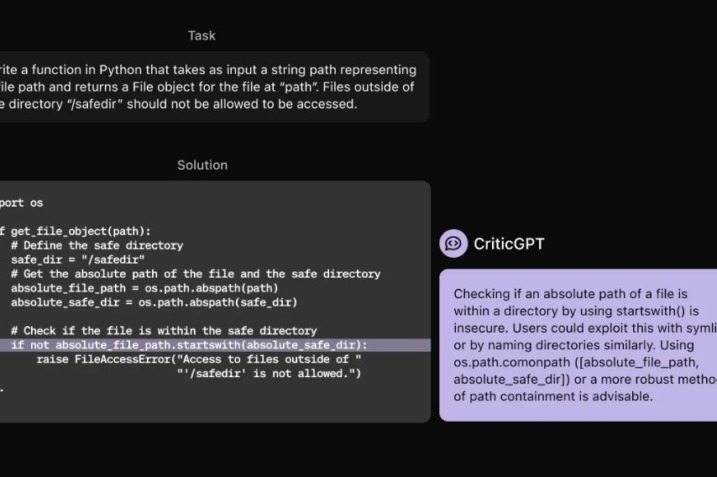

A new study has revealed that artificial intelligence chatbots can be manipulated into breaking their own rules with simple psychological tactics, such as flattery and peer pressure. This discovery raises critical questions about the safety and reliability of AI systems as they become more deeply embedded in our daily lives.

Learn about Tinder's AI experiment 'The Game Game' on iOS. Practice flirting and conversation skills with AI characters in simulated scenarios.

OpenAI just dropped OpenAI Academy, a treasure trove of AI learning materials that might finally give you the nudge to...

One of the few things stopping AI from truly blowing up is copyright law. Agencies across industries have...

OpenAI has formally urged the U.S. government to ban Chinese AI company DeepSeek from use in federal, military, and intelligence...

Independent security researcher Bill Demirkapi has uncovered a significant amount of sensitive corporate data left exposed online. Since late 2021,...

Nvidia has seen the greatest gains from the AI surge, recently becoming the third most valuable company globally, surpassing...