A recently uncovered security flaw in Google Gemini shows how attackers could use ordinary calendar invites to trick the AI into leaking private user data through an indirect prompt injection attack.

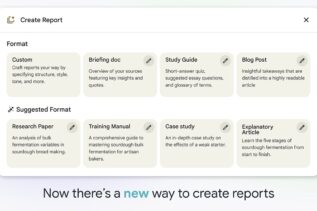

Google’s latest update to NotebookLM gives it a million-token memory and a new “Goals” feature that lets users define how the AI behaves, turning it from a research tool into a collaborator that remembers context and adapts to their workflow.