Hold onto your hats: the world of AI just got a whole lot more captivating! OpenAI has unveiled Sora, a groundbreaking model that conjures high-definition videos up to a minute long from nothing more than a text prompt. Think “snowy Tokyo stroll” and bam, you’ve got a couple walking hand in hand through a bustling city as cherry blossom petals swirl among the snowflakes. Pretty wild, right?

But before you get carried away picturing yourself directing epic movies from your fingertips, there are a few things to keep in mind. Sora won’t be swooping into your living room anytime soon. For now, it’s on a test flight with a select group of testers: red teamers who will probe it for, you guessed it, misuse, plus a handful of visual artists, designers, and filmmakers who will gauge its creative potential.

Why the caution? While Sora paints impressive pictures with words, it’s not flawless. Imagine requesting a Dalmatian peering through a window at bustling street life. Poof! You get the dog, but the bustling street has vanished. Oops! OpenAI acknowledges these glitches and even warns that the model can struggle with cause and effect: a person might take a bite out of a cookie, yet the cookie shows no bite mark afterward.

Now, Sora isn’t a lone pioneer in this text-to-video territory; big names like Meta and Google are playing in this sandbox too. What sets Sora apart is its length (a whole minute, practically a marathon in this field!) and its ability to generate an entire video at once rather than building it up frame by frame. Because the model sees many frames at a time, characters stay consistent even when they briefly move out of view.

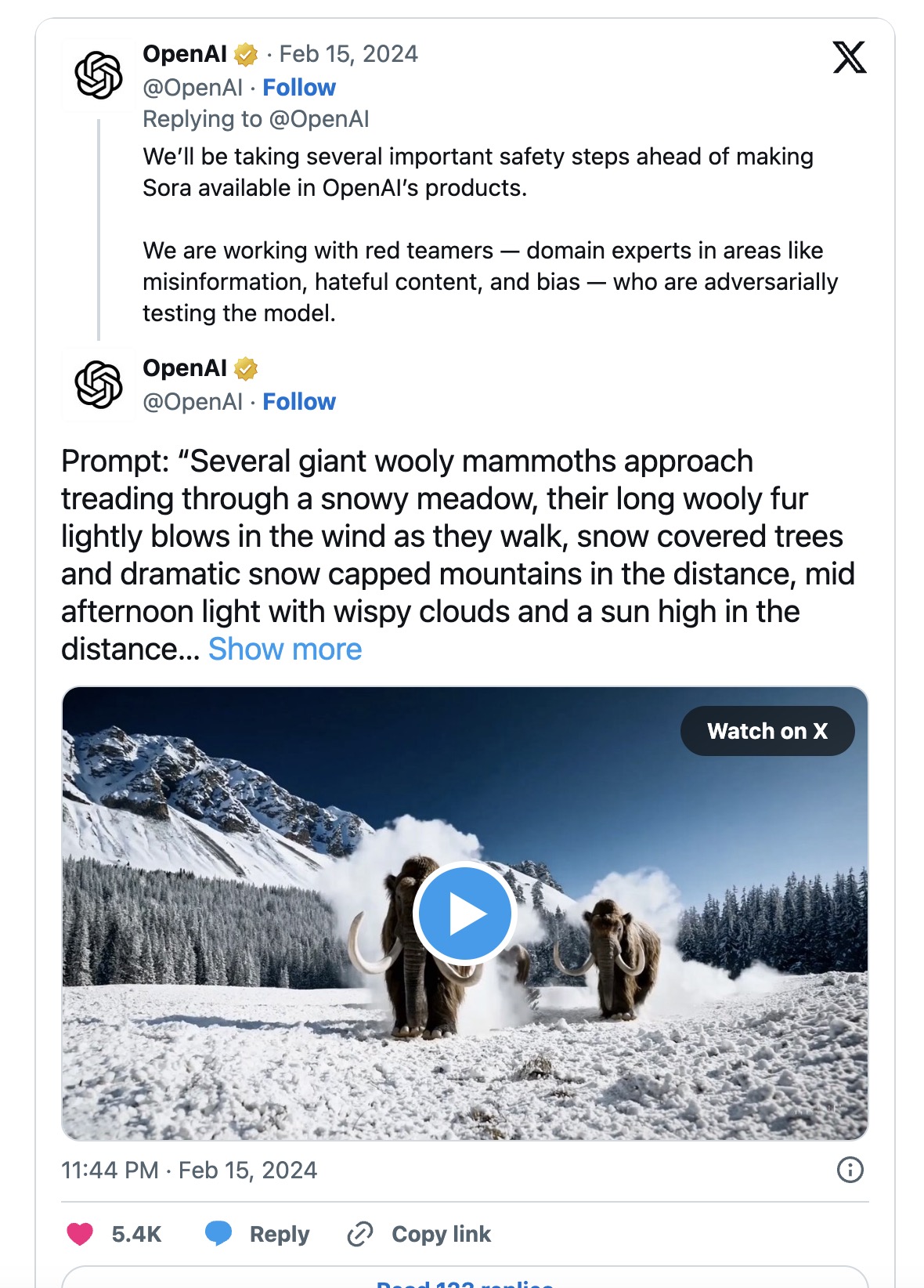

Of course, with great power comes great responsibility, and the potential for deepfakes and manipulated narratives has experts understandably nervous. Imagine swaying an election with fabricated footage! Not a pretty picture. OpenAI, for its part, is taking proactive steps. It’s working with experts on misinformation, bias, and harmful content to stress-test Sora before releasing it widely. It’s also building tools to detect Sora-generated videos and plans to embed C2PA provenance metadata in them if the model ships in an OpenAI product, making them easier to identify.

So, what does this mean for you and me? The future of storytelling and visual expression is taking off, but OpenAI is steering carefully. Sora might not be directing your home movies quite yet, but it’s a sign of things to come. And as with any powerful tool, responsible use will be key to shaping this exciting, yet potentially perilous, technological landscape.

What are your thoughts on Sora and the rise of text-to-video AI? Share your concerns and hopes in the comments below!