The age of enterprise AI has arrived. Since ChatGPT’s launch last November, public fascination with it has triggered a flood of corporate interest and an industry-wide land grab, as every major tech company races to stake its claim in this burgeoning market by integrating generative AI features into its existing products. Heavyweights like Google, Microsoft, Meta, and Baidu are already competing for market supremacy with their large language models (LLMs), while everyone else, from Adobe and AT&T to BMW and BYD, is scrambling to find applications for the ground-breaking technology.

With the help of NVIDIA’s newest cloud service, AI Foundations, companies without the resources or time to build their own models from scratch will be able to “build, refine, and operate custom large language models and generative AI models that are trained with their own proprietary data and created for their unique domain-specific tasks.”

The service’s models include NeMo, NVIDIA’s language model; BioNeMo, a fork of NeMo built for the medical research community and focused on drug and molecule discovery; and Picasso, an AI that can generate images, video, and “3D applications… to supercharge productivity for creativity, design, and digital simulation.” Despite Tuesday’s announcement, Picasso is still in private preview and both flavours of NeMo are still in early access, so it will be some time before any of them are generally available. Both NeMo and Picasso run on NVIDIA’s brand-new DGX Cloud platform and will eventually be accessible online.

These cloud-based enterprise services act as blank templates that businesses can fill with their own data for custom training. Think of ChatGPT, but built exclusively for one pharmaceutical company’s research division: NVIDIA’s services let a business customise a similarly styled LLM to its specific needs using its own proprietary data. This is in contrast to something like Google’s Bard AI, which is trained on (and draws from) data from all over the internet to generate a response. The models can be trained with anywhere from 8 billion to 530 billion parameters, the upper end being more than three times GPT-3.5’s reported 175 billion.

Think of Stable Diffusion, but trained on actual Getty Images with Getty’s consent. In a press statement on Tuesday, NVIDIA described a system based on the Picasso cloud service that consists of a number of ethically sourced text-to-image and text-to-video models that have been “trained on Getty Images’ fully licensed assets.” The statement added that “Getty Images will pay artists royalties on any sales made using the models.”

BioNeMo is built on the same technical foundations as NeMo but focuses solely on drug and molecule discovery. BioNeMo “allows researchers to fine-tune generative AI applications on their own proprietary data, and to run AI model inference directly in a web browser or through new cloud APIs that easily integrate into existing applications,” according to a release on Tuesday.

“With BioNeMo, we can pre-train large language models for molecular biology on Amgen’s proprietary data, enabling us to explore and develop therapeutic proteins for the upcoming generation of medicines that will benefit patients,” said Peter Grandsard, executive director of Biologics Therapeutic Discovery at Amgen.

Six models, including DeepMind’s AlphaFold2, Meta AI’s ESM2 and ESMFold, DiffDock, and MoFlow, will be available at launch. According to the companies, using AI-based predictive models helped cut the time needed to train “five custom models for molecule screening and optimisation” on Amgen’s proprietary antibody data from the customary three months to four weeks.

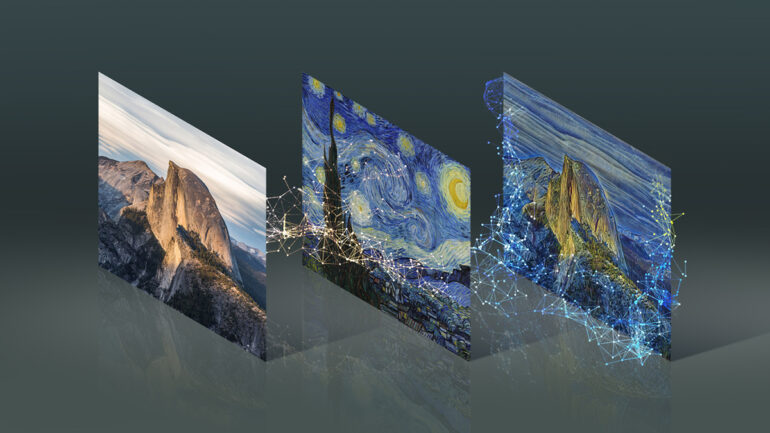

NVIDIA also announced a similar agreement with Shutterstock. The stock photography website will use Picasso to generate 3D objects from text prompts as a new tool within Creative Flow, with plans to make it available on Turbosquid.com and NVIDIA’s upcoming Omniverse platform.

“Our generative 3D partnership with NVIDIA will power the next generation of 3D contributor tools, greatly reducing the time it takes to create beautifully textured and structured 3D models,” Shutterstock CEO Paul Hennessy said in a statement. “This first-of-its-kind partnership furthers our strategy of leveraging Shutterstock’s massive pool of metadata to bring new products, tools, and content to market.”

NVIDIA is also collaborating with Adobe as part of Adobe’s Content Authenticity Initiative (CAI), which aims to increase transparency and accountability within the generative AI training process. The CAI’s proposals include a “do not train” list, similar to robots.txt but for images and multimodal content, and persistent origin tags that will detail whether a piece is AI-generated and where it came from.