Alright, hold onto your hats: AMD just unleashed the MI300A, its latest piece of tech wizardry and a chip aimed squarely at giving Nvidia a serious run for its money. This bad boy is not your everyday processor. It’s an accelerated processing unit (APU) that pairs Zen 4 CPU cores with CDNA 3 GPU compute in a single package, designed to rock the world of data center, AI, and high-performance computing (HPC) workloads.

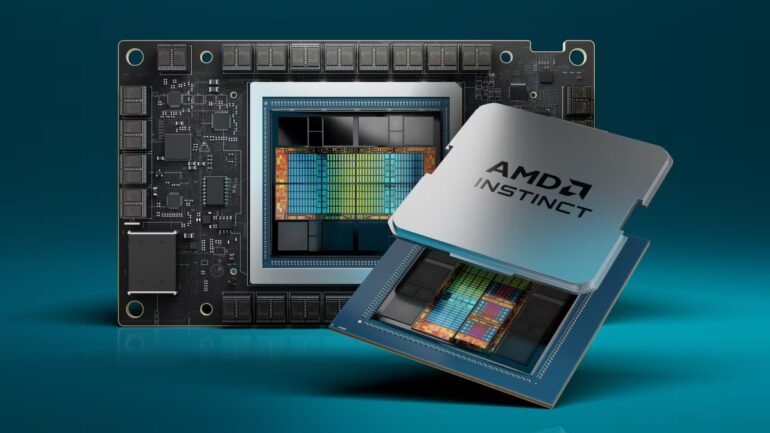

Imagine this beauty flexing 24 Zen 4 CPU cores and a whopping 228 CDNA 3 compute units for that GPU magic. And it’s not just about raw power: the MI300A packs 128GB of unified high-bandwidth memory (HBM) shared by both the CPU and the GPU. No more separate memory pools, with data shuttled back and forth between them, like in the old days. And the design? Picture 13 chiplets doing a dance in a 3.5D packaging scheme, making it the largest chip AMD has ever thrown into the ring, with a jaw-dropping 153 billion transistors. Plus, a 256MB Infinity Cache sits at its core, working its magic to boost bandwidth and cut latency for data on the move.

But hey, the MI300A isn’t the only rockstar at the party. AMD also dropped the MI300X AI accelerator, a beast with eight stacks of HBM3 memory and eight 5nm CDNA 3 GPU chiplets. It’s not just here to play; it’s here to go toe-to-toe with the big guns.

Now, AMD is aiming to take Nvidia’s crown, challenging chips like the H100 GPU and the GH200 Grace Hopper Superchip. And guess what? The MI300A isn’t just talking the talk, and AMD’s own numbers are turning heads. The company claims twice the theoretical peak HPC performance of the H100 SXM, up to four times the performance in certain workloads, and twice the peak performance per watt of the GH200. And when it comes to AI, AMD says the chip either matches or falls just slightly short of what Nvidia’s H100 can throw down.

Who’s in the AMD camp? We’ve got heavyweights like HPE, Eviden, Gigabyte, and Supermicro on board. But the real showstopper? The El Capitan supercomputer, gearing up to be the world’s first two-exaflop beast when it roars to life next year. Get ready for a wild ride into the future of computing, folks!